Open Calls

Join our research and take your organisation to the next level.

Why participate in collaborative research?

Participating in Flanders Make's collaborative research gives you access to the expertise of 1000 researchers. This accessible approach to innovation can strengthen your organisation's competitive position.

Through our community of industrial and academic partners, you gain access to unique expertise, infrastructure and knowledge.

Shared investments and expertise from all research partners enable you to derive greater value from our research projects.

Join our research and use the knowledge gained to strengthen your business.

Current open calls

Overview

Our success stories

Minimising downtime with Predictive Maintenance

Improving robot vision with AI

Optimised cooling for Colruyt Group

Technologies

Power and Energy Components and Systems

Advancing electric machines, batteries, power electronics, transmissions and thermo-fluid energy systems.

Manufacturing, Mechanical Components and Systems

Optimising mechanical components and systems, their performance, sustainability and manufacturing process.

Robotics, sensors and actuators

Improving the core components of robotics and smart machines.

Employee 5.0 Systems

Boosting well-being & productivity.

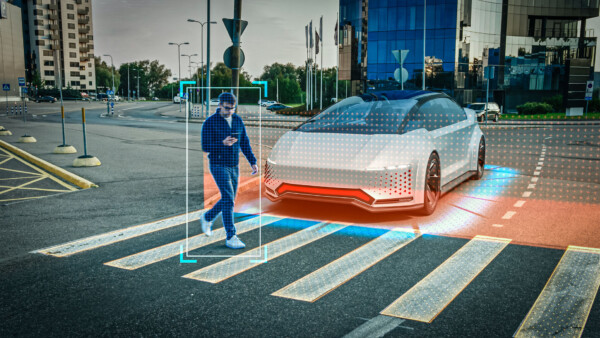

Situational and self-awareness technologies

To identify, interrogate, and evaluate both environmental conditions and system states.

Reasoning and Acting Technologies

For intelligent decision-making and the orchestration of (autonomous) actions.

System-of-Systems Technologies

For seamless interaction, coordination, and efficiency across complex industrial environments.

Business, Sustainability & Operations Management

Optimising the operations, sustainability, and value-creation of businesses.