Using prompts to manage robots: flexible bin picking without retraining

What if you could manage your robots using textual or visual prompts?

In applications such as bin picking, pick-and-place and depalletising, vision systems are essential for object detection. Currently, this usually requires intensive training for each product type. That makes it slow and difficult to scale.

With prompt-based segmentation models, you take a different approach. You give simple instructions such as “metal object” or point to an object in an image — and the robot can get to work straight away.

In this blog, we show how foundational models make this possible, and what that means in practical terms for your production environment.

A solution for product variation and long set-up times

In production environments with a wide range of products, traditional vision systems quickly reach their limits. For every new product, you have to collect data and retrain models. Even with synthetic data, set-up times mount up. When product variety is high, flexibility is necessary to make robotic solutions cost-effective.

Foundational models are AI models trained on large and diverse datasets. Vision systems built on them can therefore be used in new situations quickly, without the need for retraining.

One example is the well-known SAM3 model (Segment Anything Model), which detects and segments objects in images. You control the model using a textual description or by visually pointing to an object. This makes a huge difference in practice. Instead of extensive training, you can configure a new product in about one minute. At the same time, the approach remains intuitive and usable without in-depth AI knowledge.
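As a minimal sketch of what prompt-based configuration could look like in code, each product can be described by either a text prompt or a clicked pixel. The `Prompt` class, the `PRODUCTS` catalogue, the `add_product` helper and the pixel coordinates are hypothetical names chosen for illustration; they are not part of SAM3 or any library.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Prompt:
    """Hypothetical prompt: a text description OR a clicked pixel (x, y)."""
    text: Optional[str] = None
    point: Optional[Tuple[int, int]] = None

    def __post_init__(self) -> None:
        # Exactly one of the two prompt types must be given.
        if (self.text is None) == (self.point is None):
            raise ValueError("set exactly one of 'text' or 'point'")


# Hypothetical product catalogue: one prompt per product, no training data.
PRODUCTS: Dict[str, Prompt] = {
    "bracket": Prompt(text="metal object"),
    "sample_box": Prompt(point=(412, 230)),
}


def add_product(catalogue: Dict[str, Prompt], name: str, prompt: Prompt) -> Dict[str, Prompt]:
    """Return a new catalogue with one extra product; the input is not modified."""
    return {**catalogue, name: prompt}
```

In this sketch, adding a product means adding one catalogue entry, for example `add_product(PRODUCTS, "bottle", Prompt(text="plastic bottle"))`, rather than collecting data and retraining a model.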

Prompt – Detection – Picking: A demonstration

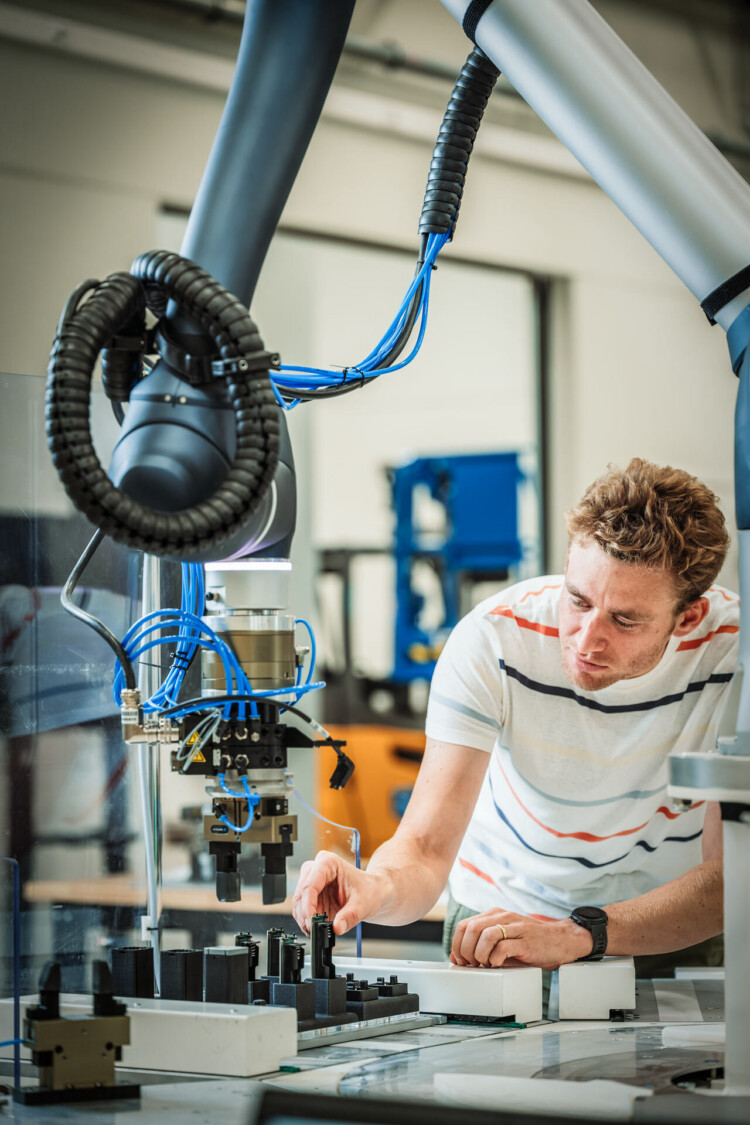

We developed a demonstrator in which we use the SAM3 model to quickly locate previously unseen products in an image. We combine this with a 3D camera: the depth information is used to determine the correct gripping position. This allows a robot to detect and pick up even unknown objects without prior training.
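One simple way to turn a segmentation mask and a depth image into a gripping position can be sketched as follows. This is an illustration under stated assumptions, not the method used in the demonstrator: it assumes a pinhole camera with known intrinsics (`fx`, `fy`, `cx`, `cy`), a depth image in metres aligned with the colour image, and a binary mask that has already been produced by the segmentation model. The function name `grasp_point_from_mask` is hypothetical.

```python
import numpy as np


def grasp_point_from_mask(mask, depth_m, fx, fy, cx, cy):
    """Estimate a 3D grasp point (X, Y, Z) in metres in the camera frame.

    mask    : 2D array, non-zero where the object was segmented (assumed input)
    depth_m : 2D array of the same shape, depth in metres (0 or NaN = no data)
    fx, fy, cx, cy : pinhole intrinsics of the camera (assumed known)
    Returns None when the mask contains no pixel with valid depth.
    """
    mask = np.asarray(mask).astype(bool)
    depth_m = np.asarray(depth_m, dtype=float)

    # Keep only object pixels that have a usable depth measurement.
    valid = mask & np.isfinite(depth_m) & (depth_m > 0)
    if not valid.any():
        return None

    rows, cols = np.nonzero(valid)
    u = cols.mean()  # centroid column (pixel x)
    v = rows.mean()  # centroid row (pixel y)

    # The median is less sensitive to depth noise at object edges than the mean.
    z = float(np.median(depth_m[valid]))

    # Back-project the centroid pixel to 3D with the pinhole model.
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return (float(x), float(y), z)
```

The mask centroid is a reasonable first guess for compact objects. For ring-shaped or strongly concave objects the centroid can fall outside the object, so a real application would likely need a more careful grasp strategy.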

Try it out in your production

Foundational models enable vision systems to be deployed quickly in new situations, which is ideal for dynamic production environments. When product variation is high and object positions are not fixed (bin picking or pick-and-place), this approach offers significant added value. By working with prompts instead of training:

- you significantly reduce setup time

- you increase the flexibility of your robot applications

- you make automation feasible for a wider product range

You can try SAM3 online for free with your own images and videos. Depending on your specific use case, other foundational models may be relevant for your vision systems. These include, amongst others, CNOS (requires a CAD model), MUSE (works with 2D reference images), CLIPSeg and DINO-X / Grounding DINO. For advice and support on getting started with these models, or for more information on vision and robotics, please contact us or follow us via the VIRAL project.

Prompting for your business case?
